How can we compute basis for Nullspace?

There are at least two answers to this question; one of these is more educative and the other one is at least as educative (in a different and profound way) as well as practical.

Method 1

The first method is a more introductory level method. It is helpful to know it and good to read it as a refresher even if one is more advanced student of the topic.

A linear system $\mathbf{Ax=b}$ is homogeneous when $\mathbf b=\mathbf 0$ and nonhomogeneous when $\mathbf b \neq \mathbf 0$. Assuming that the system is consistent (i.e., it is solvable for the given $\mathbf b$), the solution $\mathbf x$ can be expressed in terms of basic and free variables as (see eq. (2.5.7) of C.D.Meyer):

\begin{equation}

\mathbf x = \mathbf p + x_{f_1}\mathbf h_1 + x_{f_2} \mathbf h_2 + \dots + x_{f_n-r} \mathbf h_{n-r}. \,\,\,\,\, (*)

\label{eq:general_solution}

\end{equation}

While there are many ways of picking the free variables, the convention is to always solve for the unknowns corresponding to pivotal positions (p58); i.e., basic variables are the variables corresponding to basic columns, and the rest are the free variables. The process of “solving for unknowns corresponding to pivotal positions” is best explained over an example as below.

Let $\mathbf A$ be the matrix with echelon form $\mathbf E$ as (see p57) $$\mathbf A = \begin{pmatrix}

1 & 2 & 2 & 3 \\ 2 &4 &1 &3 \\ 3 & 6 & 1 & 4 \end{pmatrix}\,\,\,\,\,\mathbf E=\begin{pmatrix}

1 & 2 & 2 & 3\\0 & 0 & -3 & -3\\ 0 & 0 & 0 & 0\end{pmatrix}.$$

Here the basic columns are the 1st and the 3rd ones (because they contain the pivots), there fore the basic variables are $x_1$ and $x_3$, whereas $x_2$ and $x_4$ are the free variables. The original homogeneous system $\mathbf {Ax=0}$ is equivalent to solving the reduced system, which can be written out as

$$x_1+ 2x_2 + 2x_3 + 3x_4 = 0$$ $$-3x_3 – 3x_4 = 0. $$

Solving for $x_3$, we see that $$x_3=-x_4.$$ Then, solving for the other basic variable, namely $x_1$, we get

$$ x_1 = -2x_2 – 2x_3 -3x_4 = -2x_2 -x_4.$$

Now we can write the general solution and bring it to the form in $(*)$ as

\begin{equation}

\mathbf x = \begin{pmatrix}

x_1 \\ x_2 \\ x_3 \\ x_4

\end{pmatrix} = \begin{pmatrix}

-2 x_2 – x_4 \\ x_2 \\ -x_4 \\ x_4

\end{pmatrix} = x_2 \begin{pmatrix}

-2 \\ 1 \\ 0 \\0

\end{pmatrix} + x_4 \begin{pmatrix}

-1 \\ 0 \\ -1 \\ 1

\end{pmatrix}.

\end{equation}

Thus, the $\mathbf h_1$ and $\mathbf h_2$ of the form in $(*)$ are identified as $\mathbf h_1 = (-2, 1, 0,0)^T$ and $\mathbf h_2 = (-1, 0, -1, 1)^T$. This means that, any linear combination of the vectors $\mathbf h_1$ and $\mathbf h_2$ solves the system $\mathbf{Ax=0}$. In other words, we have found a \textit{\textbf{basis

} for the nullspace of $\mathbf A$}:

$$\mathcal{N}(\mathbf A) = \text{span}(\mathbf h_1, \mathbf h_2).$$

Method 2

The second method is more interesting for at least two reasons. First, it is highly educative, because studying it allows one to solidify fundamental concepts about linear algebra, as you’ll hopefully see below. Secondly, it can be used for actual calculations, too. By the end of the description there will be a jupyter link as well.

Before we go on with the description of the method, it is useful to list the topics used in this description:

- QR decomposition

- Orthogonal decomposition

- Fundamental theorem of linear algebra

(i.e., Orthogonal decomposition theorem)

The method directly relies on the concept of (orthogonal) complementary subspaces. It is based on the fact that, for any matrix, $\mathbf A$ with rank $r$, there are orthogonal matrices $\mathbf U$ and $\mathbf V$ and a nonsingular matrix $\mathbf C$ such that

$$\mathbf{A=URV}^T = \mathbf U \begin{pmatrix}\mathbf C & \mathbf 0 \\ \mathbf 0 & \mathbf 0\end{pmatrix} \mathbf V^T,$$ such that

- the first $r$ columns of $\mathbf U$ are an orthonormal basis for $R(\mathbf A)$

- remaining columns of $\mathbf U$ are an orthonormal basis for $N(\mathbf A^T)$

- the first $r$ columns in $\mathbf V$ are an orthonormal basis for $R(\mathbf A^T)$

- remaining columns of $\mathbf V$ are an orthonormal basis for $N(\mathbf A)$

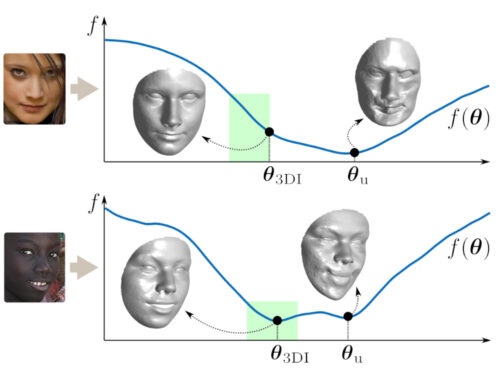

This is an extremely powerful theorem, particularly because it is not only a theoretical result — it can be easily operationalized through the QR decomposition, as we describe below. (In fact even the theoretical implication of this decomposition are quite significant, e.g., see this post). Before we operationalize this, let’s draw what this looks like. We want the picture carved into our minds.

Now on to the question of how do we actually compute this transformation, which will directly answer the question set forth in the title of this article. That is, if we find the matrix $\mathbf V$, we simply take the last $n-r$ columns and they will give our basis.

First of all, it is important to realize that URV is not a unique decomposition (which can be a strength), and we’ll explain only one way to compute it, but one that is computationally efficient. (Technically SVD decomposition can also be used, but that would be unnecessarily complex if all that we care is having bases for the fundamental subspaces of the matrix). Our computation will rely on the QR decomposition (see p407 of C.D.Meyer).

That the QR decomposition provides the $\mathbf U$ matrix of the decomposition is very obvious, because its whole point is to extract an orthonormal set of bases from a given matrix $\mathbf A$ as well as an upper triangular coefficient matrix. We will compute URV factorization simply by applying QR decomposition twice as follows.

First, apply QR directly on $\mathbf A$ as $$\mathbf{A=Q}_1 \mathbf R_1.$$ Note that what we apply here is the full QR decomposition; i.e., the $\mathbf R_1$ that results will possibly have zero rows that emerge due to $\mathbf A$ not being full rank. The $\mathbf Q_1$ that emerges here is the $\mathbf U$ that we are looking for, and it contains a basis for $R(\mathbf A)$ as well as $N(\mathbf A^T)$. Now we need to find bases for the other pair of complementary subspaces induced by $\mathbf A$, namely $R(\mathbf A^T)$ and $N(\mathbf A)$.

Before we proceed, it’s helpful and educative to recall the concept of (row) equivalent matrices: Two matrices are row equivalent if one can be obtained by left-multiplying the other one with a nonsingular matrix. Thus, it becomes clear that $\mathbf R_1$ and $\mathbf A$ are row equivalent. It is helpful to realize this, because we realize that all the information that we need to compute basis for $R(\mathbf A^T)$ and, by extension, for the $N(\mathbf A)$ that we seek, is encoded in $\mathbf R_1$.

The last sentence above suggests that the problem of finding basis for $N(\mathbf A)$ can be replaced by finding an orthonormal basis for $R(\mathbf A^T)$, or equivalently, a basis for $R(\mathbf R_1^T)$. But the latter is a rather trivial problem: We know that QR decomposition can be used to solve it. Hence our second application of QR decomposition on transposed $\mathbf R_1$;

$$\mathbf R_1^T = \mathbf Q_2 \mathbf R_2.$$Once again, the QR decomposition that we apply above must be full QR decomposition.

It is clear that $$\mathbf A = \mathbf Q_1 \mathbf R_1 = \mathbf Q_1 \mathbf R_2^T \mathbf Q_2^T.$$ This means that we have all the bases that we need: $\mathbf U$ equals the $\mathbf Q_1$ that emerged and $\mathbf V$ equals the $\mathbf Q_2$ that emerged. This process is summarized in the picture below.

Reading through this article must be helpful for educative purposes. As we saw, several concepts come together when we want to compute basis for nullspace. We

- Introduced two ways to compute the nullspace

- How to operationalize URV decomposition

- URV decomposition is not unique

- A small comparison between SVD and QR

- Did a refresher on row equivalence

- Discussed the strength of the orthogonal decomposition theorem (i.e., problem of finding basis for nullspace of A is replaced by finding basis for range of A^T).

As a final step to solidify all we learned or went through, it is helpful to look at an actual computational example in this Jupyter notebok.